Elon Musk has been very explicit in promising a robotaxi launch in Austin in June with unsupervised full self-driving (FSD). We'll give him some leeway on the timing and say this counts as a YES if it happens by the end of August.

As of April 2025, Tesla seems to be testing this with employees and with supervised FSD and doubling down on the public Austin launch.

PS: A big monkey wrench no one anticipated when we created this market is how to treat the passenger-seat safety monitors. See FAQ9 for how we're trying to handle that in a principled way. Tesla is very polarizing and I know it's "obvious" to one side that safety monitors = "supervised" and that it's equally obvious to the other side that the driver's seat being empty is what matters. I can't emphasize enough how not obvious any of this is. At least so far, speaking now in August 2025.

FAQ

1. Does it have to be a public launch?

Yes, but we won't quibble about waitlists. As long as even 10 non-handpicked members of the public have used the service by the end of August, that's a YES. Also if there's a waitlist, anyone has to be able to get on it and there has to be intent to scale up. In other words, Tesla robotaxis have to be actually becoming a thing, with summer 2025 as when it started.

If it's invite-only and Tesla is hand-picking people, that's not a public launch. If it's viral-style invites with exponential growth from the start, that's likely to be within the spirit of a public launch.

A potential litmus test is whether serious journalists and Tesla haters end up able to try the service.

UPDATE: We're deeming this to be satisfied.

2. What if there's a human backup driver in the driver's seat?

This importantly does not count. That's supervised FSD.

3. But what if the backup driver never actually intervenes?

Compare to Waymo, which goes millions of miles between [injury-causing] incidents. If there's a backup driver we're going to presume that it's because interventions are still needed, even if rarely.

4. What if it's only available for certain fixed routes?

That would resolve NO. It has to be available on unrestricted public roads [restrictions like no highways are OK] and you have to be able to choose an arbitrary destination. I.e., it has to count as a taxi service.

5. What if it's only available in a certain neighborhood?

This we'll allow. It just has to be a big enough neighborhood that it makes sense to use a taxi. Basically anything that isn't a drastic restriction of the environment.

6. What if they drop the robotaxi part but roll out unsupervised FSD to Tesla owners?

This is unlikely but if this were level 4+ autonomy where you could send your car by itself to pick up a friend, we'd call that a YES per the spirit of the question.

7. What about level 3 autonomy?

Level 3 means you don't have to actively supervise the driving (like you can read a book in the driver's seat) as long as you're available to immediately take over when the car beeps at you. This would be tantalizingly close and a very big deal but is ultimately a NO. My reason to be picky about this is that a big part of the spirit of the question is whether Tesla will catch up to Waymo, technologically if not in scale at first.

8. What about tele-operation?

The short answer is that tele-operation is not level 4 autonomy, so that would resolve NO for this market. It's a common misconception that Waymo's phone-a-human feature is tele-operation. It's not remotely (ha) like a human with a VR headset steering and braking. If that ever happened it would count as a disengagement and have to be reported. See Waymo's blog post with examples and screencaps of the cars needing remote assistance.

To get technical about the boundary between a remote human giving guidance to the car vs remotely operating it, grep "remote assistance" in Waymo's advice letter filed with the California Public Utilities Commission last month. Excerpt:

The Waymo AV [autonomous vehicle] sometimes reaches out to Waymo Remote Assistance for additional information to contextualize its environment. The Waymo Remote Assistance team supports the Waymo AV with information and suggestions [...] Assistance is designed to be provided quickly - in a matter of seconds - to help get the Waymo AV on its way with minimal delay. For a majority of requests that the Waymo AV makes during everyday driving, the Waymo AV is able to proceed driving autonomously on its own. In very limited circumstances such as to facilitate movement of the AV out of a freeway lane onto an adjacent shoulder, if possible, our Event Response agents are able to remotely move the Waymo AV under strict parameters, including at a very low speed over a very short distance.

Tentatively, Tesla needs to meet the bar for autonomy that Waymo has set. But if there are edge cases where Tesla is close enough in spirit, we can debate that in the comments.

9. What about human safety monitors in the passenger seat?

Oh geez, it's like Elon Musk is trolling us to maximize the ambiguity of these market resolutions. Tentatively (we'll keep discussing in the comments) my verdict on this question depends on whether the human safety monitor has to be eyes-on-the-road the whole time with their finger on a kill switch or emergency brake. If so, I believe that's still level 2 autonomy. Or sub-4 in any case.

See also FAQ3 for why this matters even if a kill switch is never actually used. We need there not only to be no actual disengagements but no counterfactual disengagements. Like imagine that these robotaxis would totally mow down a kid who ran into the road. That would mean a safety monitor with an emergency brake is necessary, even if no kids happen to jump in front of any robotaxis before this market closes. Waymo, per the definition of level 4 autonomy, does not have that kind of supervised self-driving.

10. Will we ultimately trust Tesla if it reports it's genuinely level 4?

I want to avoid this since I don't think Tesla has exactly earned our trust on this. I believe the truth will come out if we wait long enough, so that's what I'll be inclined to do. If the truth seems impossible for us to ascertain, we can consider resolve-to-PROB.

11. Will we trust government certification that it's level 4?

Yes, I think this is the right standard. Elon Musk said on 2025-07-09 that Tesla was waiting on regulatory approval for robotaxis in California and expected to launch in the Bay Area "in a month or two". I'm not sure what such approval implies about autonomy level but I expect it to be evidence in favor. (And if it starts to look like Musk was bullshitting, that would be evidence against.)

12. What if it's still ambiguous on August 31?

Then we'll extend the market close. The deadline for Tesla to meet the criteria for a launch is August 31 regardless. We just may need more time to determine, in retrospect, whether it counted by then. I suspect that with enough hindsight the ambiguity will resolve. Note in particular FAQ1 which says that Tesla robotaxis have to be becoming a thing (what "a thing" is is TBD but something about ubiquity and availability) with summer 2025 as when it started. Basically, we may need to look back on summer 2025 and decide whether that was a controlled demo, done before they actually had level 4 autonomy, or whether they had it and just were scaling up slowly and cautiously at first.

13. If safety monitors are still present, say, a year later, is there any way for this to resolve YES?

No, that's well past the point of presuming that Tesla had not achieved level 4 autonomy in summer 2025.

14. What if they ditch the safety monitors after August 31st but tele-operation is still a question mark?

We'll also need transparency about tele-operation and disengagements. If that doesn't happen by June 22, 2026 (a year after the robotaxi launch) then that too is a presumed NO.

Ask more clarifying questions! I'll be super transparent about my thinking and will make sure the resolution is fair if I have a conflict of interest due to my position in this market.

[Ignore any auto-generated clarifications below this line. I'll add to the FAQ as needed.]

Update 2025-11-01 (PST) (AI summary of creator comment): The creator is [tentatively] proposing a new necessary condition for YES resolution: the graph of driver-out miles (miles without a safety driver in the driver's seat) should go roughly exponential in the year following the initial launch. If the graph is flat or going down (as it may have done in October 2025), that would be a sufficient condition for NO resolution.
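One way the flat-or-declining test above could be made concrete is sketched below. This is only an illustration under stated assumptions, not the creator's actual method: the function names, the `min_factor` threshold, and both data series are made up for the example. It fits a straight line to the logarithm of monthly driver-out miles and reports the average month-over-month multiplier. A multiplier near or below 1 means the graph is flat or going down. Note that a multiplier above the threshold shows sustained growth on average; it cannot by itself prove the curve is truly exponential.

```python
import math

def monthly_growth_factor(monthly_miles):
    """Average month-over-month multiplier from a least-squares fit of
    log(miles) against month index. All values must be positive."""
    n = len(monthly_miles)
    xs = list(range(n))
    ys = [math.log(m) for m in monthly_miles]
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    slope = (sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
             / sum((x - x_mean) ** 2 for x in xs))
    return math.exp(slope)

def classify(monthly_miles, min_factor=1.1):
    """Label a series; min_factor is an arbitrary illustrative threshold."""
    factor = monthly_growth_factor(monthly_miles)
    return "sustained growth" if factor >= min_factor else "flat or declining"

# Made-up illustrative series, NOT real Tesla data.
doubling_each_month = [1_000, 2_000, 4_000, 8_000, 16_000]
plateau = [5_000, 5_200, 4_900, 5_100, 5_000]

print(classify(doubling_each_month))
print(classify(plateau))
```

Where exactly to set the threshold, and how many flat months to tolerate, would still be a judgment call for the comments.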

Update 2025-12-10 (PST) (AI summary of creator comment): The creator has indicated that Elon Musk's November 6th, 2025 statement ("Now that we believe we have full self-driving / autonomy solved, or within a few months of having unsupervised autonomy solved... We're on the cusp of that") appears to be an admission that the cars weren't level 4 in August 2025. The creator is open to counterarguments but views this as evidence against YES resolution.

Update 2025-12-10 (PST) (AI summary of creator comment): The creator clarified that presence of safety monitors alone is not dispositive for determining if the service meets level 4 autonomy. What matters is whether the safety monitor is necessary for safety (e.g., having their finger on a kill switch).

Additionally, if Tesla doesn't remove safety monitors until deploying a markedly bigger AI model, that would be evidence the previous AI model was not level 4 autonomous.

Update 2026-01-31 (PST) (AI summary of creator comment): The creator clarified that passenger-seat emergency stop buttons should be evaluated based on their function:

If the button is a real-time "hit the brakes we're gonna crash!" intervention button, this would indicate supervision that could rule out level 4 autonomy

If the button is a "stop requested as soon as safely possible" button (where the car remains in control until safely stopped), this would not rule out level 4 autonomy

This distinction applies to both Waymo (the benchmark) and Tesla. The creator emphasized that mere presence of a safety monitor doesn't rule out level 4 - what matters is whether there is supervision with the ability to intervene in real time.

Update 2026-02-01 (PST) (AI summary of creator comment): The creator has proposed a concrete scenario for June 22, 2026 (the one-year deadline from FAQ14) that would result in NO resolution:

(a) Longer zero-intervention streaks but not to the point that unsupervised FSD is safer than humans

(b) More unsupervised robotaxi rides but not at a scale where tele-operation becomes implausible

(c) Continued lack of transparency on disengagements

(d) Creative new milestones that seem like watersheds but turn out to be closer to controlled demos

Conversely, if Tesla demonstrates a clear step change in autonomy before June 22, 2026 (such as declaring victory, opening up about disengagements, and shooting past Waymo), there would still be a debate about whether Tesla was at level 4 on August 31, 2025, but it would be more reasonable to give Tesla the benefit of the doubt on questions about tele-operation and kill switches.

Update 2026-02-02 (PST) (AI summary of creator comment): The creator has clarified terminology and concepts around supervision and disengagement:

Supervision refers to a human in the loop in real time, watching the road and able to intervene.

Real-time disengagement is when a human supervisor intervenes to control the car in some way - a gap in the car's autonomy. If the car stops on its own and asks for help or needs rescuing, those might count as other kinds of disengagement but not a real-time disengagement.

Evidence threshold: Human drivers have fatalities roughly once per 100 million miles, or non-fatal crashes every half million miles. A supervised self-driving car needs to go hundreds of thousands of miles between real-time disengagements before we have much evidence it's human-level safe.

With less than 100k robotaxi miles, seeing zero real-time disengagements would still be fairly weak evidence that the robotaxis would crash less than humans when unsupervised.
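To see why zero real-time disengagements in under 100k miles is weak evidence, here is a rough sketch of the arithmetic. It assumes disengagements arrive as a Poisson process and, pessimistically, that every real-time disengagement would otherwise have been a crash; both are simplifying assumptions for illustration, and the function names are hypothetical. The human benchmark of one non-fatal crash per half million miles is taken from the text above.

```python
import math

HUMAN_MILES_PER_CRASH = 500_000  # from the text: non-fatal crash every half million miles

def max_rate_given_zero_events(clean_miles, confidence=0.95):
    """Largest events-per-mile rate still consistent with seeing zero events.

    Under a Poisson model, P(zero events) = exp(-rate * miles). Setting that
    equal to 1 - confidence and solving for rate gives the upper bound.
    """
    return -math.log(1.0 - confidence) / clean_miles

def miles_per_event_lower_bound(clean_miles, confidence=0.95):
    """Fewest miles per event we can claim after `clean_miles` with no events."""
    return 1.0 / max_rate_given_zero_events(clean_miles, confidence)

for clean_miles in (100_000, 500_000, 1_500_000):
    bound = miles_per_event_lower_bound(clean_miles)
    verdict = "clears the human benchmark" if bound >= HUMAN_MILES_PER_CRASH else "inconclusive"
    print(f"{clean_miles:>9,} clean miles -> at least {bound:,.0f} miles per event ({verdict})")
```

With 100k clean miles the 95% bound is only about one event per 33k miles, far short of the human benchmark. Under these assumptions it takes roughly three times the benchmark distance in clean miles before the bound clears it.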

For miles with an empty driver's seat, we need to know:

If safety monitors had the ability to intervene with a passenger-side kill switch

If that kill switch was real-time (like an emergency brake) or just a request for the car to autonomously come to a stop as quickly as possible

If the robotaxis have been remotely supervised (using the definition of supervision from FAQ8)

Update 2026-02-02 (PST) (AI summary of creator comment): The creator has analyzed data suggesting Tesla robotaxis may have markedly worse safety than human drivers, even with supervision. If this analysis is fair, the creator indicates that Tesla's safety record could be too far below human-level to count as level 4 autonomy, regardless of questions about kill switches or remote supervision.

The creator notes that human-level safety has been assumed as a lower bound for level 4 autonomy throughout this market. A safety record significantly worse than human drivers would not meet the level 4 standard, even if other technical criteria were satisfied.

The creator acknowledges a possible Tesla-optimist interpretation: that Musk "jumped the gun" in summer 2025 but may have achieved unsupervised FSD later (possibly January 2026). However, this would still result in NO resolution for this market, since the criteria must be met by August 31, 2025.


@dreev Tesla announces expansion of Robotaxi in Dallas & Houston https://x.com/robotaxi/status/2045564609504116771

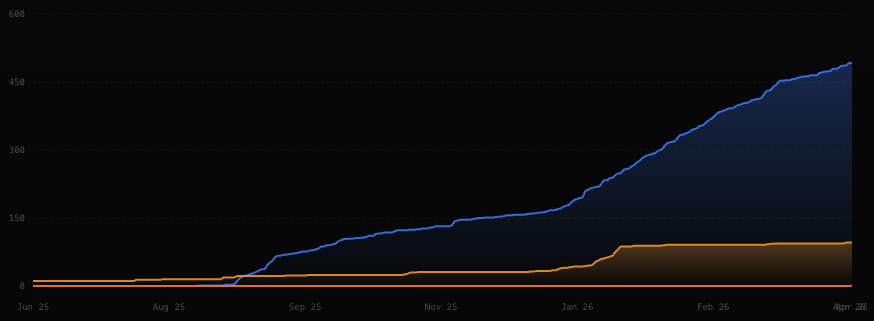

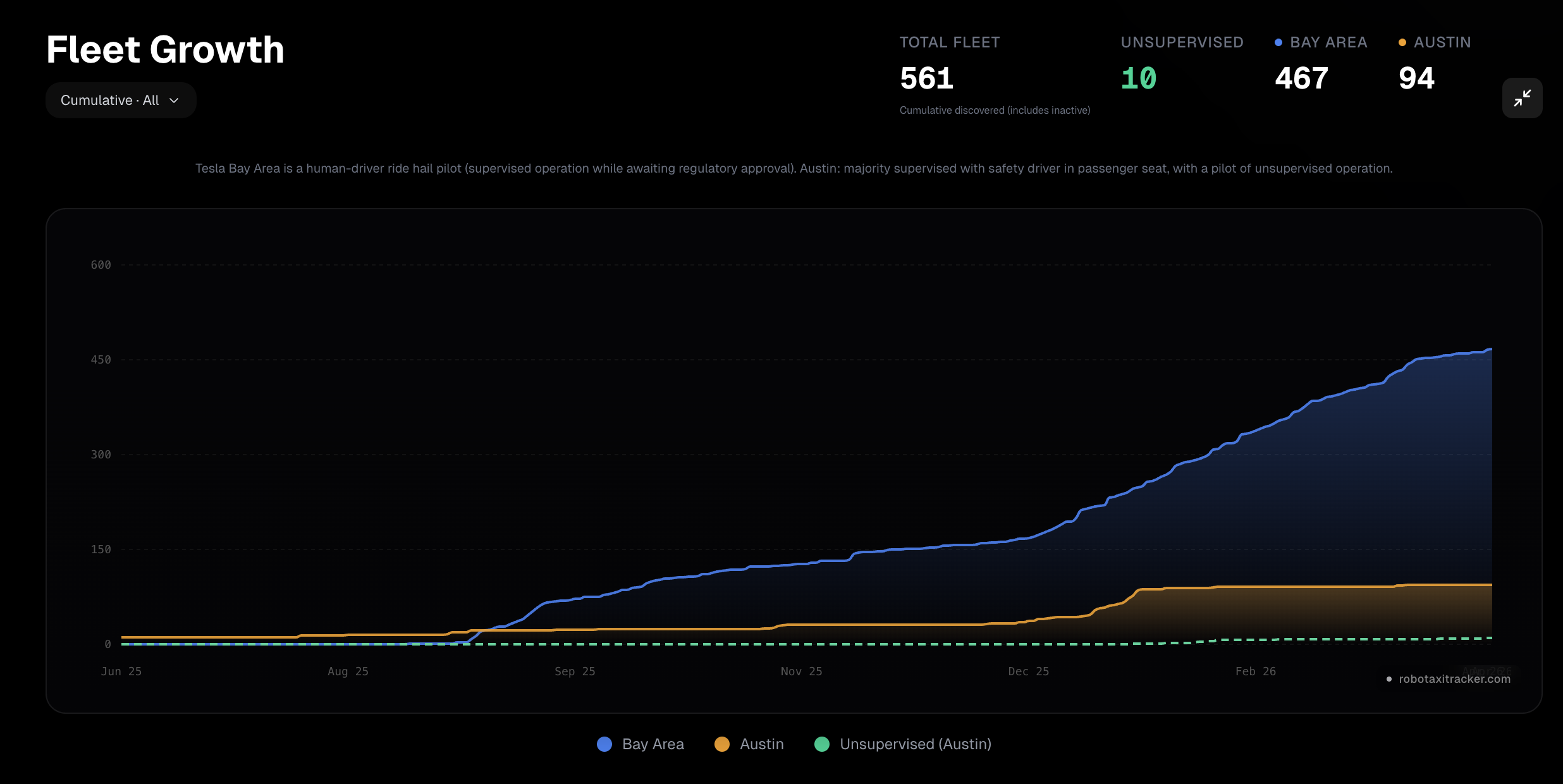

@MarkosGiannopoulos Exciting! But with Elon Musk the first question is whether they added two single cars, one in Dallas and one in Houston, just for the illusion of expansion. The previous way they've equivocated on this is by adding more Bay Area rideshare vehicles with humans in the driver's seat while keeping the cars with empty driver's seats capped at seemingly under 50 active vehicles for months now:

(Blue is Bay Area and orange is Austin, but both count total vehicles ever seen and so may be overestimates.)

If we could just get disengagement data on the Bay Area robotaxis we could estimate how close they're getting to level 4. (Or if they already are? This market apparently thinks there's still an 11% chance that it already counted.)

PS: I'm continuing to do my part. Renting that Tesla for a road trip so I could experience the so-called self-driving for myself completely ruined me for ever driving a normal car again. So despite my skepticism and disgust with Musk (and of course my unalloyed Waymo stanning) I am now a Tesla owner. 😬

Should we have a market for number of miles before my first safety-critical disengagement? I'm starting to think it won't happen in my lifetime, either because FSD finally gets superhumanly safe or because I get too complacent to actually supervise it. (J/K, we'd count an actual crash as a would-be safety-critical disengagement for purposes of such a market.)

@dreev Yes, we'll need to wait a bit and see how many cars they actually have. There have been several videos of customers online already, though (and with some issues with the maps :)) https://x.com/TexasTSLA/status/2045678141146890365

@dreev "Should we have a market for the number of miles before my first safety-critical disengagement" - definitely!

Congrats on the new Tesla!

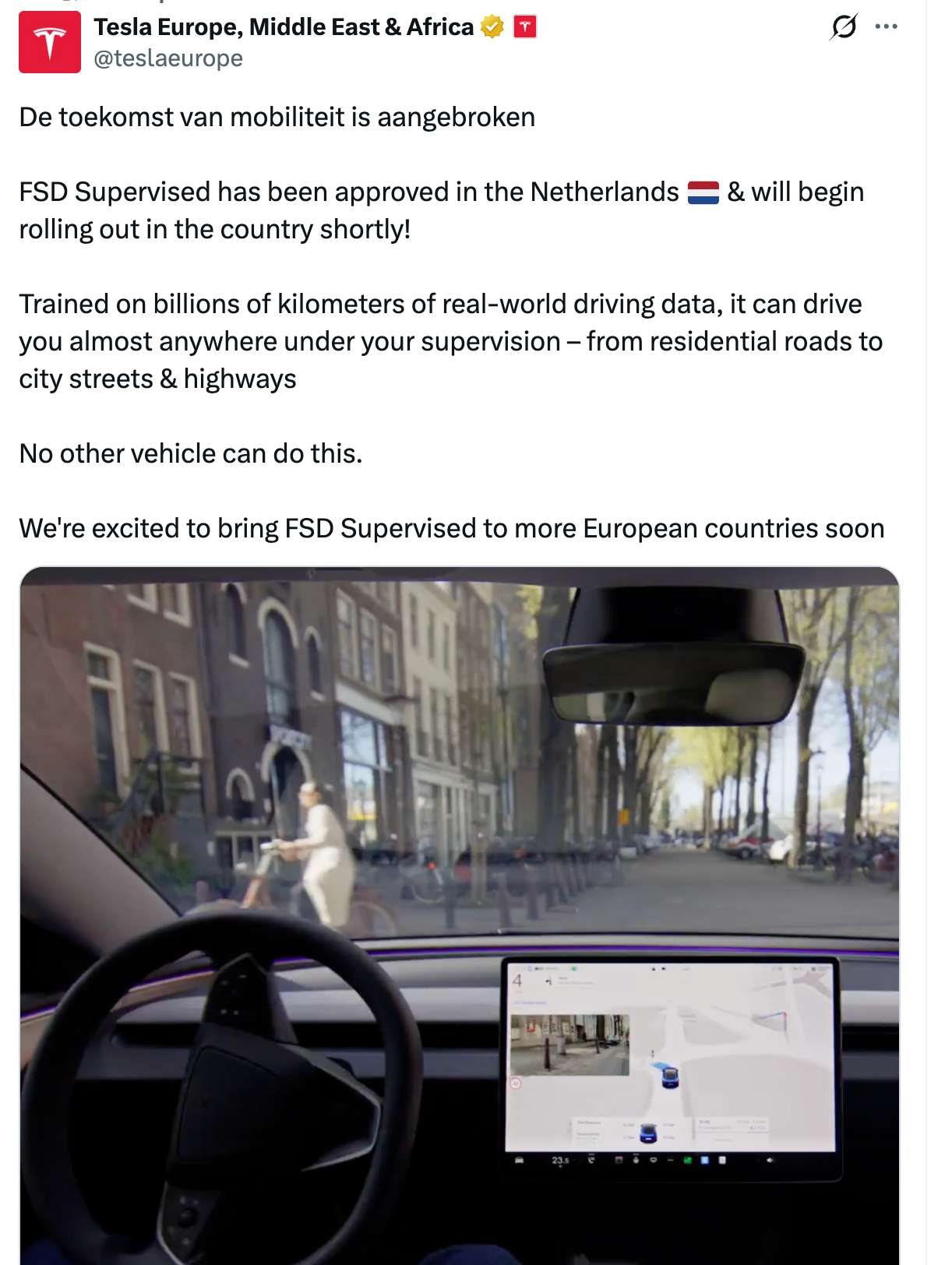

FSD approved in the Netherlands

Official statement (translated):

In this message, you will find important information regarding the attainment of this type approval. The RDW has issued type approval for Tesla's driver assistance system, FSD Supervised (Full Self Driving Supervised). This driver assistance system has been extensively researched and tested for over a year and a half on our test track and on public roads. Safety is paramount for the RDW. Proper use of this driver assistance system makes a positive contribution to road safety. A vehicle with FSD Supervised is not self-driving. It is a driver assistance system, and the driver remains responsible and must always maintain control. Thanks to the type approval, the driver assistance system can now be used in the Netherlands, with possible future expansion to all member states of the European Union. https://www.rdw.nl/nieuws/2026/toelichting-rdw-op-europese-typegoedkeuring-tesla-met-voorlopige-geldigheid-in-nederland

Elon Musk on Twitter: Upcoming point releases will bring polish and version 15 will "far exceed human levels of safety". Cynically, one could read between the lines here that the current version is not yet at human-level safety, at least not without supervision.

FSD 14.3 is starting to roll out today. Exciting! This is the one that Elon Musk previously characterized as the final piece of the puzzle for unsupervised autonomy. But, as @ChristopherRandles has been arguing, that seems to suggest that the robotaxis as released last summer were not level 4.

This item from the 14.3 release notes jumped out at me:

Rewrote the AI compiler and runtime from the ground up with MLIR, resulting in 20% faster reaction time and improving model iteration speed.

That sounds like the kind of thing that adds more bugs than it removes, suggesting a long slog before it's safe to read a book in the driver's seat of one's own Tesla.

I remain profoundly confused as to how the driver's seats of the robotaxis in Austin have been empty for the last 10 months, with or without a passenger-seat safety monitor. My theories:

Remote supervision (not to be confused with Waymo-style remote assistance which is allowed at level 4).

Keeping the mileage low enough to yolo it.

FSD 14.3 really is the final puzzle piece but the robotaxis have been running a pre-release version of 14.3 this whole time.

Theory 1 sounds like pathetic cope to the Tesla fans, I know. Theory 3 is just not very plausible. The NHTSA incident data is consistent with theory 2:

That's Tesla in red, humans in yellow, and Waymo crushing everyone else, in blue. So the robotaxis are probably a bit less safe than human drivers but we can't be sure, and there hasn't been any crash that seems super egregious. Subjectively, riding in a Tesla with the latest FSD, it absolutely feels superhuman sometimes, and never too egregiously subhuman. See my writeup of my own experiment with FSD recently.

I'd love to hear your latest thoughts, @MarkosGiannopoulos. You commented in my other market that you'd guess that someone could go 10k miles in a private Tesla before they'd have to intervene for a safety reason. Wildly impressive but still pretty far short of human level, right? Are you coming around on the idea that NO will be the fairest resolution of this market? If I'm remembering correctly, we've committed ourselves to defaulting to NO in June 2026 if nothing has changed, like if the robotaxi program stays at its current plateau.

(Note that I'm not saying that if the robotaxi program suddenly hockey-sticks that that's an automatic YES. Just that we could have some agonizing to do to be sure that we're finding the fairest resolution. Whereas if the status quo holds for a couple more months we'll have an easy NO.)

@dreev >"I remain profoundly confused"

Not sure I am following your confusion or how your theories fit into this.

Remote supervision? Why invoke this when there is in-car supervision, with monitors keeping eyes on the road and intervening when necessary? Surely a system getting close to level 4 plus in-car human supervision is a lot safer than the system alone? Additionally, I would expect a system getting close to level 4 plus in-car human supervision to be better than the system plus remote supervision.

>"Keeping the mileage low enough to yolo it." Precisely what mileage? That of completely unsupervised mileage? Maybe, but the closer you are to the level 4 standard, the more mileage you can yolo while tolerating the risk. Yolo all the mileage with monitors? No, you don't take such wild risks; that is why the safety monitor supervision is there, to reduce the risk to an acceptable level. AKA supervision is required and therefore the service is not level 4.

>"Theory 3 is just not very plausible". Glad to hear you say this. However, I want to add that "a pre-release version" sounds a lot like it was not yet ready and had not launched as of 31 Aug 2025.

Seven months prior to release suggests one of two things: either it just was not ready enough on 31 Aug 2025, or they are rather slow at evaluating and verifying that the system works well enough. The number of different versions released suggests the latter is rather implausible, as you said.

@dreev My current working theory is that the actual Robotaxi service/testing/training are being conducted in California. The logic is as follows

- Texas laws allowed Tesla in June to have cars with no one in the driver's seat. This allowed Tesla to claim "we have a car that drives itself and we have paying customers"

- This could not be done in California because they would need to go through a Waymo-like process, where they would provide much more detailed reporting to authorities before being allowed to remove drivers

- They did establish a service in California and used the obligation of having a person in the driver's seat to avoid having to provide detailed reports of all incidents (not just accidents but also disengagements).

- The evidence of this is that the California fleet has greatly expanded continuously (see chart below), while Texas has seen very little progress and is currently utilised mostly for a few fully unsupervised rides (which are more marketing and less real testing)

- The services in both Texas and California have been running the beta of 14.3, collecting data and improving the stack

Where does all that actually leave us?

a) 14.3 is ready and released to the public,

b) Tesla is gearing up Cybercar production (see photo from today). These are cars without a steering wheel. It will be very strange to have hundreds of them sitting around in a few weeks.

c) To continue operations in Texas, Tesla will need a TxDMV license by May 28 (see https://www.txdmv.gov/AVprogram)

So, yes, a June deadline seems fine to me. My opinion that a robotaxi service was launched in June'25 remains unchanged.

>"Remote supervision? Why invoke this when there is in car supervision with monitors keeping eyes on the road and intervening when necessary?"

Because it seems to me that a passenger-seat safety monitor just isn't that helpful for safety. The touchscreen is too slow to intervene to prevent the worst accidents in real time. A physical kill switch (if there was one) helps more but it seems implausible or differently unsafe to have such a switch slam on the brakes. Maybe if the kill switch was like a brake pedal, letting the human apply any desired braking force, but we're fairly sure that wasn't the case.

Even more to the point, there are now a handful of "fully unsupervised" robotaxis with no employee in the car at all. This makes it feel more plausible that the passenger-seat safety monitors weren't safety-critical, necessary as they may have been for other reasons.

Another way to think of it: most of the value of the passenger-seat safety monitor is being able to scooch over to the driver's seat for highway routes or when the car gets itself stuck -- i.e., not real-time supervision. And only real-time supervision is disqualifying for level 4 autonomy. I realize there's still plenty of ambiguity here though. No need to reiterate the reasons to consider passenger-seat supervision to be incompatible with level 4.

@MarkosGiannopoulos, your theory is making tons of sense to me. What do you think of the criterion that the robotaxis need to be at least human-level safe in order to count? And do you know of any way to estimate how safe the California supervised robotaxis would be if not for the disengagements from the safety drivers?

Also, do you know of any requirements of the TxDMV licensing (transparency about remote operation?) that might be clarifying for this market?

It continues to amaze me what a perfect scissor statement this market could yet turn out to be, though I still think that's looking unlikely. A lot would have to suddenly happen in the next couple months for YES to even have a case, is my current thinking. We'll see how things look in June!

@dreev Here's a summary via GPT: TxDMV’s AV program requires an authorization for commercial operation of Level 4/5 vehicles without a human driver beginning May 28, 2026, and the application requires only a written acknowledgment/certification on a limited set of points: compliance with traffic laws, a recording device, federal-law compliance of the ADS, ability to reach a minimal risk condition, registration/title, insurance, and certification that DPS received an emergency-services interaction plan. The TxDMV page does not list any explicit public disclosure about remote assistance, teleoperation, intervention rates, or miles driven with remote help.

It reads like a perfect scenario for how Tesla operates :D

So we are safe to predict that Tesla will get an autonomous driving license next month and there will be zero improvement in the transparency of how effective the service is (except for reports of people actually using it).

@dreev >"Because it seems to me that a passenger-seat safety monitor just isn't that helpful for safety. The touchscreen is too slow to intervene to prevent the worst accidents in real time."

I do accept that a safety driver in the driver's seat can take more actions, and take them faster, than a monitor using a touchscreen. However, I would suggest that:

1. The system seems fairly good at high-speed highway driving, since the accidents we have seen reports of seem to be slow-speed / parking-lot incidents. You probably need fast reactions for higher-speed driving, and Tesla is/was using a driver in the driver's seat for this.

2. If the system starts to do something that looks dangerous or unhelpful, in what proportion of such incidents is slowing down a more appropriate alternative action? I think it will be fairly high: 98%? 99%? There might be 5% where the best action is to accelerate or swerve but, for the majority of these, slowing down before taking other action will also be acceptable if it isn't too late to avoid a crash.

If the system is nearly good enough to let it run on its own, then you don't need much further safety improvement, and it seems to me that Tesla has decided that a safety monitor in the passenger seat is enough to bring the risk down to an acceptable level.

The choice between passenger seat and driver's seat in Austin does seem to be arranged according to the necessary reaction time, which tells me it is based on safety considerations. This is in stark contrast to your "may have been for other reasons".

@MarkosGiannopoulos 😂 😭 I'm now one of the people who's used it and, yeah, I'm now of two diametrically opposed minds. I can't stand the way Tesla (or specifically Elon Musk) operates. But also I don't think I can bear to ever drive a normal car again. I'm completely spoiled by my 2500 miles lackadaisically supervising a self-driving Tesla.

It reminds me of the progress towards AGI. Sometimes it feels like Zeno's paradox. We keep making quantum leaps in capabilities and it feels like one or two more such leaps will surely get us there. But then those next leaps happen and we zoom in and find that we're not yet there after all. Rinse, repeat. I don't actually think it's a Zeno's paradox. At some point we'll actually get there. But it's shockingly difficult to predict how soon.

(But what's super frustrating in the case of self-driving is that we know Waymo has already shot past human level and Tesla has the disengagement data from supervised miles to compute how safe they'd be if unsupervised, but they're just not telling us. Maybe they're just waiting till the data is an unambiguous slam dunk before sharing it?)

@dreev As a public company and with Musk being a constant target of attention (and of political division), Tesla is super careful (to the point of trying to hide as much information as possible) about its image.

@MarkosGiannopoulos I'd say that argument cuts both ways. Optimistically they're just ultraconservative about waiting till the data presents a slam dunk case before showing it off. Pessimistically, they have all the more incentive to show off the data if it's even slightly above human-level safety and the lack of transparency is damning.

I'm left feeling fully in the dark about how safe the self-driving actually is. I'm eager to hear what intuitions others have.

v14.2.2.5 is currently showing a mere 860 miles to critical DE (disengagement) on 34830 miles.

This seems like a significant downward shift from about 4000 miles to critical DE on v14.2.1.25.

https://teslafsdtracker.com/

What is going on with this?

Is the system getting worse, or are they realising/categorising that more disengagements are critical, or something else?

Is this what is causing the pause in expansion?

@ChristopherRandles Really good question. At the moment (typing this from the passenger seat of a Tesla on v14.2.2.5 at 100% disengagement-free for 2309 miles and counting) I'm inclined to suspect problems with the crowd-sourced data. But of course my mere [checks odometer] 2312 miles is not super strong evidence either way.
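To put a number on "not super strong evidence either way", here is a back-of-the-envelope sketch. It assumes critical disengagements follow a Poisson process and that one driver's miles are comparable to the crowd-sourced figures; both are assumptions made for illustration, and the function name is hypothetical. The 860 and 4000 mile figures are the tracker numbers quoted above.

```python
import math

def prob_clean_streak(streak_miles, miles_per_critical_de):
    """Chance of zero critical disengagements over streak_miles, assuming
    they occur at a constant average rate (Poisson process)."""
    return math.exp(-streak_miles / miles_per_critical_de)

streak = 2309  # disengagement-free miles reported in the comment above

p_if_860 = prob_clean_streak(streak, 860)    # tracker figure for v14.2.2.5
p_if_4000 = prob_clean_streak(streak, 4000)  # tracker figure for v14.2.1.25

# How much more likely the streak is under the 4000-mile figure.
likelihood_ratio = p_if_4000 / p_if_860

print(f"P(clean streak | 860 mi/DE)  = {p_if_860:.3f}")
print(f"P(clean streak | 4000 mi/DE) = {p_if_4000:.3f}")
print(f"likelihood ratio             = {likelihood_ratio:.1f}")
```

Under these assumptions the streak would happen roughly 7% of the time at 860 miles per critical DE and about 56% of the time at 4000, a likelihood ratio of about 8 to 1. That leans toward the higher figure without settling anything, especially since drivers may differ in what they count as critical.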

RobotaxiTracker is showing the Austin robotaxi fleet shrinking at the moment.

Are we all agreed that if Tesla doesn't start scaling up until the upcoming AI5 chip is done that that would suffice for a definitive NO for this market?

@dreev

"High-volume production of Tesla's next-generation AI5 (or Hardware 5) chips is scheduled to begin in 2027. While design work is nearing completion, limited production of samples and a small number of units is expected to start in 2026."

Why would we have to wait that long? Surely v15 or at least v14.5 would be expected well before then?

or if not driving software versions then

>"The creator is [tentatively] proposing a new necessary condition for YES resolution: the graph of driver-out miles (miles without a safety driver in the driver's seat) should go roughly exponential in the year following the initial launch. If the graph is flat or going down (as it may have done in October 2025), that would be a sufficient condition for NO resolution."

How many of these flat periods, or how long, does it take before you say this is not exponential growth and that they are instead waiting for the software to reach the level required?

Or simply look at the timeline: 4.5 months after the end of Aug before any unsupervised operation, and near enough 6.5 months with a maximum of only 7 unsupervised vehicles.

Just when did this launch? Not June, as they had to fix software issues, and I don't recall anything in July or Aug. You could have level 4 software and, out of an abundance of caution, still place safety monitors in the cars. In this scenario the launch might be with safety monitors, but then there would be a more progressive roll-out, and not official investigations of erratic driving that needed to be fixed. So I think you should rule out June and conclude there is no sensible date to be the launch date until at least Jan 2026 when unsupervised first started.

https://www.notateslaapp.com/news/3337/tesla-delays-next-gen-ai5-to-mid-2027-cybercab-will-launch-on-ai4-hardware

@ChristopherRandles Yeah, I should've emphasized that that's not an "if and only if". The case for NO seems stronger than ever and I'm just hoping to identify something so definitive that even the most ardent YES defenders concede.

I guess right now there's still a needle-eye path to victory for YES:

1. We somehow learn or find reason to give Tesla the benefit of the doubt that they haven't used remote supervision or kill switches.

2. NHTSA crash data ends up showing the robotaxis to be no worse than human drivers.

3. The robotaxi service goes exponential.

4. We end up with reason to believe that the hardware and software are not significantly different from what was running in the robotaxis in Austin on August 31st.

If all that happened quickly enough then I guess it would at least feel stingy to say that the launch didn't happen last summer. Like, in that universe, it wouldn't feel too excessively generous to characterize it as a launch in summer 2025 that was just very slow and cautious.

I don't think that's our universe and I think that's becoming ever clearer. So I'm just anxious to identify lines in the sand beyond which we can call the NO official. But you're right that the AI5 chip is probably too far in the future to be helpful in that regard. We already agreed (I think? At least @MarkosGiannopoulos did -- correct me if I'm wrong) that we can resolve NO on June 22, 2026, if nothing changes by then.

So I guess I'm just saying that even if things explode (the good kind of explode) before that date, if they do so specifically because of a breakthrough in the hardware or software, that would be very exciting but would also leave us at NO for this market. We'd infer that Tesla wasn't at level 4 on August 31, 2025.

Again, I appreciate all the reasons that's already highly unlikely, I'm just hoping to avoid litigating it.

Tesla fan who extremely does not want this to be true is worried that the robotaxi program has stalled: https://www.youtube.com/watch?v=zK75LoEBURo

Relatedly, I added a list of non-updates to James's Tesla vs Waymo market:

https://manifold.markets/JamesGrugett/tesla-serves-more-fully-autonomous#i76c41294i

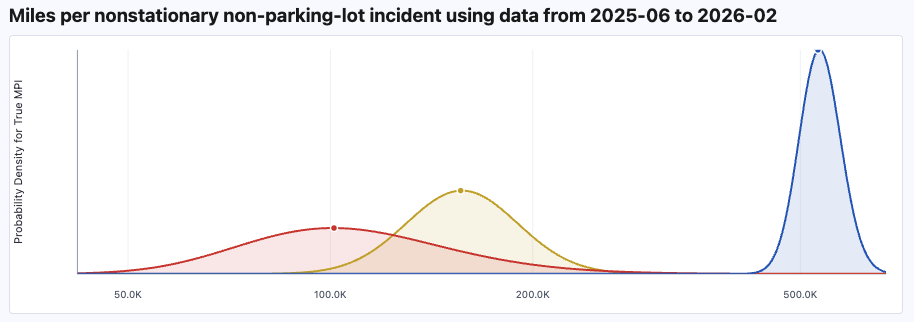

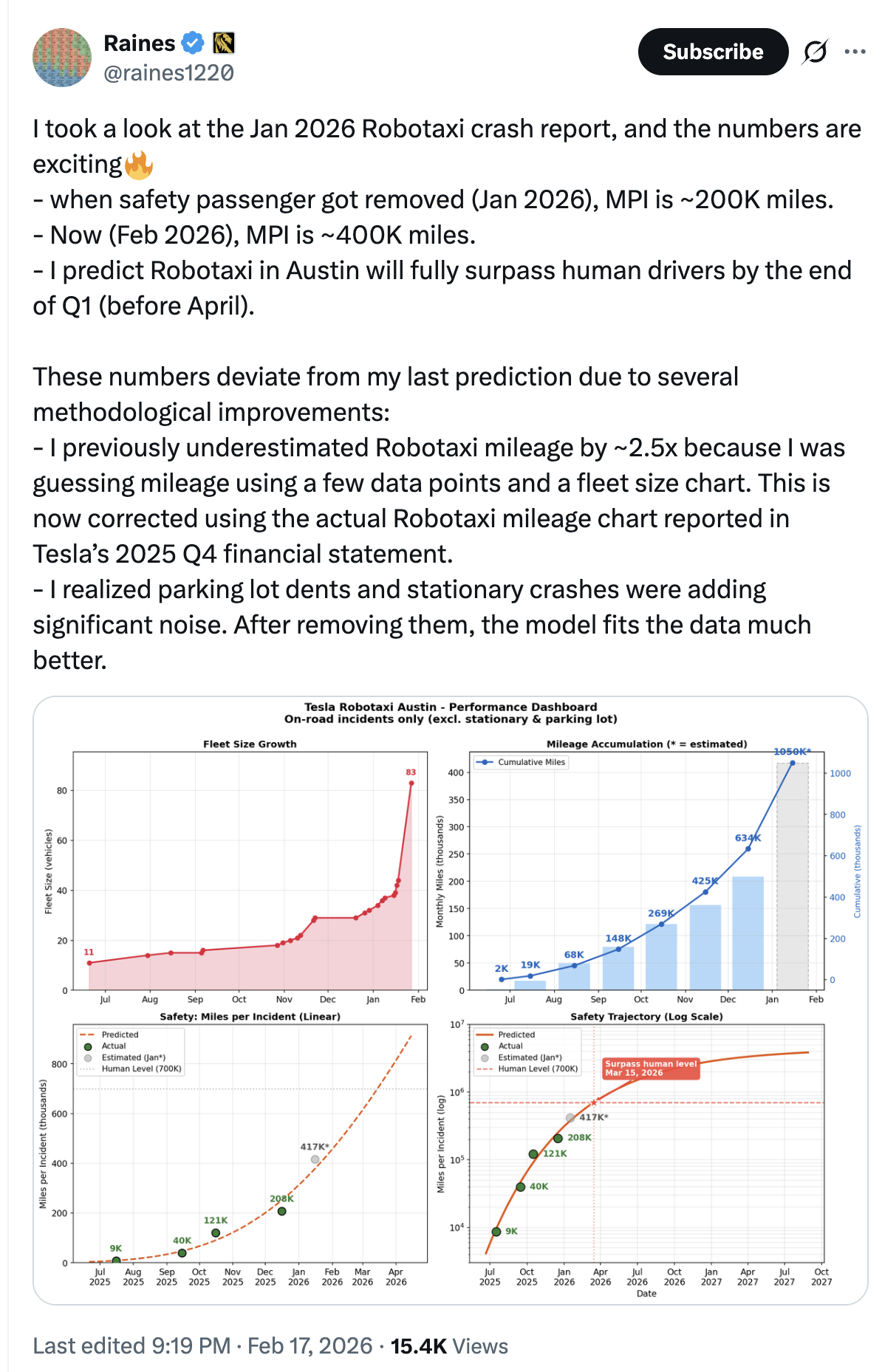

@dreev research on Robotaxi safety

https://x.com/raines1220/status/2023854888040411140

@MarkosGiannopoulos

Oct 2025 at 40k MPI (miles per incident) and Feb 2026 at 417k, and this is still below human level.

(And to make it look that good, the analysis has removed "parking lot dents and stationary crashes".)

Seems like evidence they are still improving it up towards human level?
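To put a number on that improvement, here is a quick back-of-the-envelope sketch. It takes the two figures quoted above at face value, reads MPI as miles per incident, treats October to February as four months, and assumes steady compounding, which two data points cannot confirm. The variable names are just for illustration.

```python
import math

# Figures as quoted in this thread from the linked analysis (not independently verified).
mpi_oct_2025 = 40_000
mpi_feb_2026 = 417_000
months_elapsed = 4  # Oct 2025 -> Feb 2026, an approximation

# Overall improvement factor between the two estimates.
improvement = mpi_feb_2026 / mpi_oct_2025

# Implied month-over-month factor if the improvement compounded steadily.
monthly_factor = improvement ** (1 / months_elapsed)

# Months needed to double MPI at that implied rate.
doubling_months = math.log(2) / math.log(monthly_factor)

print(f"improvement: {improvement:.1f}x")
print(f"implied monthly factor: {monthly_factor:.2f}x")
print(f"implied doubling time: {doubling_months:.1f} months")
```

Roughly a tenfold gain in four months, if the estimates are comparable. Changes in what gets excluded between the two dates would break that comparison.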

@ChristopherRandles This was mostly shared because @dreev was trying to run the numbers on the Robotaxi incidents. However, I think it's immaterial to this market. There are already tons of human taxi drivers who are worse than the average driver :)

@MarkosGiannopoulos I'm seeing potential problems with that analysis: Are they excluding miles with a human in the driver's seat (all California rides, Austin rides that include highways, etc)? Are they overestimating the fleet size? (Robotaxitracker.com says 45 robotaxis currently.) I do like the idea of excluding parking lot bumps and incidents where the robotaxi was stationary. (I mean, it's possible for a robotaxi to, for example, run a red light and then come to a standstill in the middle of an intersection and get hit, which is to say that being stationary at the moment of impact isn't necessarily exculpating, but it's pretty good evidence.) I think this is all extremely fuzzy when trying to compare to human drivers because the reporting for human incidents is so different. But we can compare to Waymo and Zoox! That was my strategy in https://agifriday.substack.com/p/crashla and I may try repeating it with the data filtered the way this person is doing.

@dreev From what I saw, they take the 650,000-mile chart that Tesla made public in its Q4 2025 investor note as being about Austin only, probably because the chart says "Robotaxi miles". (Tesla does not use the term Robotaxi in California; the Robotaxi page at https://www.tesla.com/robotaxi makes no mention of California either.)

@MarkosGiannopoulos Oh! That changes things! So maybe it's only the highway trips (and other times, like for weather, when the safety monitor has gone back in the driver's seat) that are getting lumped in? Any ideas for estimating that fraction, if so?

It's brutal trying to suss out the truth. I'm scrutinizing the relevant page of Tesla's Q4 report and they seem to leave it completely ambiguous whether that 650k miles includes supervised rides. I'm working on repeating my analysis while giving Tesla every possible benefit of the doubt, however undeserved, just to get an upper bound on what Tesla bulls can reasonably choose to believe.

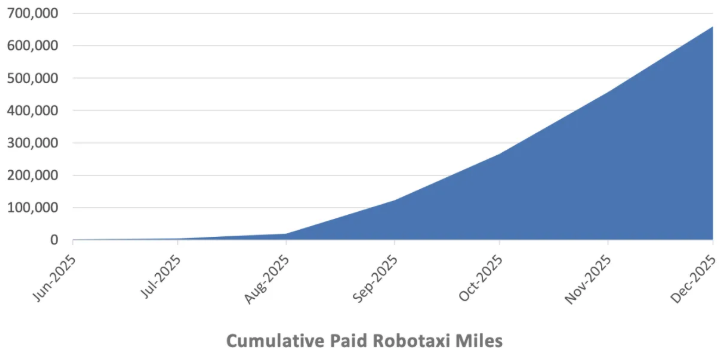

From the official Tesla Q4 report:

Testing of driverless robotaxis began in December 2025, suggesting that rides as of August 31 did not count as driverless. That's puzzling because they've been reporting incidents to NHTSA since June 2025 for which they officially report that the car had no driver/operator, remote or otherwise.

Tesla calls the California program a ride-hailing service, avoiding calling those cars robotaxis explicitly.

They show a cumulative plot of robotaxi miles, presumably because a more standard miles per month plot would look embarrassingly flat for recent months.

No indication of whether the highway rides and bad-weather rides in which the safety monitor moved back to the driver's seat are included in this mileage.

Note that robotaxitracker.com is explicitly calibrated to the 650k in the Q4 chart, so we're not really getting independent evidence there.

But robotaxi-safety-tracker.com estimates mileage based on fleet size (from robotaxitracker.com) so that's a somewhat independent check.

See https://dreeves.github.io/crashla/ for graphs and raw data.
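On the cumulative-plot point above: a cumulative curve always rises, so it can hide a slowdown that a per-month plot would show immediately. Here is a minimal sketch of the conversion, using made-up toy numbers (not Tesla's data) and a hypothetical helper name.

```python
def monthly_from_cumulative(cumulative):
    """Difference consecutive cumulative totals to recover per-period values."""
    return [later - earlier for earlier, later in zip(cumulative, cumulative[1:])]


# Toy cumulative totals, invented purely for illustration.
cumulative_miles = [0, 100, 300, 450, 550, 600]
monthly = monthly_from_cumulative(cumulative_miles)

print(monthly)  # [100, 200, 150, 100, 50]
```

In this toy series the cumulative total climbs every month while the monthly figure has been falling since the second month, which is the pattern a cumulative-only chart would obscure.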

@dreev "driverless robotaxis" = no safety person in the car. The robotaxis have been using an ADS (Automated Driving System) since day 1 (June), so everything needs to be reported to NHTSA.

@MarkosGiannopoulos Hmm, I guessss. Weird to say "driverless" rather than "safety-monitor-less" or "fully unsupervised" or similar. But I guess this is just the same old question mark about how much human supervision these robotaxis have had.

@MarkosGiannopoulos The digging continues and I'm satisfied that you're correct. In the Q3 Tesla earnings call, Ashok Elluswamy said that Bay Area rideshare miles had exceeded a million by October 22, 2025. So, assuming they're not committing securities fraud, we can take this graph to be the mileage for just the Austin robotaxis:

There may still be a subset of those miles for which a human was in the driver's seat (highway rides and bad weather rides -- I wish we knew what fraction those are).

I've updated my AGI Friday posts accordingly:

https://agifriday.substack.com/p/crashla

https://agifriday.substack.com/p/crashla2

Definitely let me know if you spot more errors! And thanks so much for all the help getting to the truth on these questions.

@dreev Good catch. In general, the investor decks and the call transcripts give a more accurate view of what Tesla is actually doing than whatever Musk posts on X :D